When AI Becomes Command-and-Control Infrastructure

Understanding the emerging risk of AI-mediated malware communication

Artificial intelligence systems are increasingly embedded into everyday workflows. From development environments and search assistants to productivity tools and customer support platforms, AI services now routinely interact with the web on behalf of users.

While this capability enables powerful automation and information retrieval, security researchers are beginning to demonstrate a concerning possibility: AI services themselves may become unintended infrastructure for malware communication.

Recent proof-of-concept research shows that AI assistants with web-browsing capabilities can be manipulated into acting as a covert command-and-control relay for malicious software. Rather than communicating directly with attacker infrastructure, malware can route requests through legitimate AI services, making malicious traffic hard to distinguish from normal user activity at the network level.

This technique reflects a broader shift in attacker behavior — one where trusted cloud platforms and AI services are abused to evade traditional detection mechanisms.

How the Attack Works

In traditional malware operations, compromised systems communicate directly with a command-and-control (C2) server operated by the attacker. Security tools commonly detect this activity by identifying suspicious domains, flagging unusual outbound traffic, or correlating connections to known malicious IP addresses.

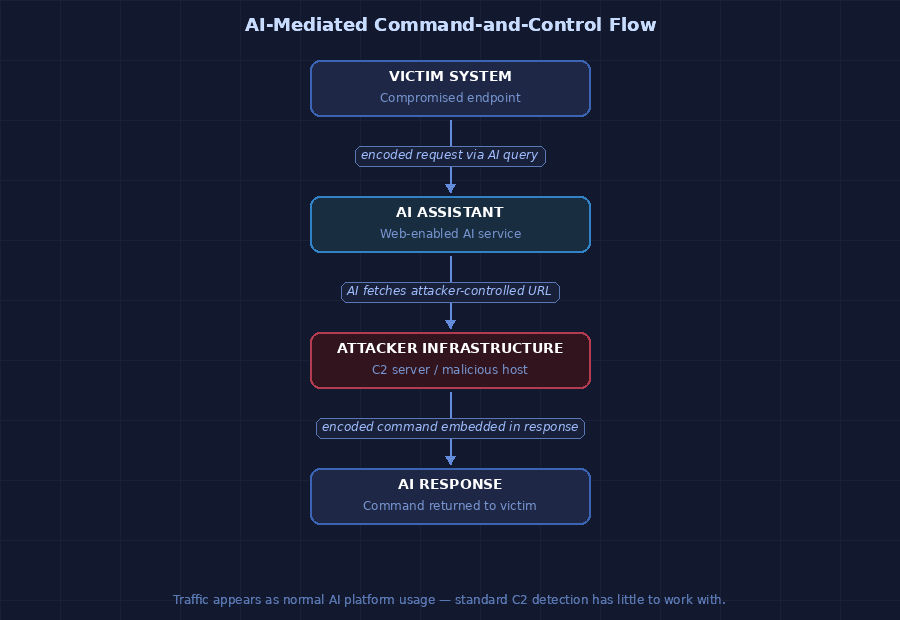

The AI-mediated model changes this architecture fundamentally.

Instead of contacting attacker infrastructure directly, the malware interacts with an AI service that has web access capabilities. The AI assistant fetches content from attacker-controlled URLs and returns responses that contain encoded instructions. In this model, the AI platform functions as an intermediary, passing data between the infected machine and the attacker so that the two endpoints never communicate with each other directly.

A simplified flow looks like this:

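- The malware encodes victim data inside the parameters of an attacker-controlled URL and asks the AI assistant to fetch that URL, so the request reaches the attacker from the AI platform rather than from the infected machine.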

- The attacker's server interprets the inbound request, generates instructions, and embeds the next command inside a web response.

- The AI assistant processes that response and returns it to the infected system — all without the communication ever resembling a traditional C2 exchange.

Because the traffic flows through a legitimate, widely used AI platform, standard network-based detection has very little to work with.
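From the defender's side, the first step of this flow does leave one trace: the URL the assistant is asked to fetch carries the encoded data. The following is a minimal sketch of a check for that, assuming the requested URLs can be logged somewhere (an egress proxy or platform audit log, for instance; the article does not say which). The function names and both thresholds are illustrative assumptions, not values from the research.

```python
import math
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def suspicious_params(url: str, min_length: int = 40, min_entropy: float = 4.0) -> list:
    """Return the names of query parameters whose values are long and high-entropy.

    Long, random-looking values are typical of encoded or encrypted payloads.
    Both thresholds are illustrative assumptions and would need tuning.
    """
    flagged = []
    for name, value in parse_qsl(urlsplit(url).query, keep_blank_values=True):
        if len(value) >= min_length and shannon_entropy(value) >= min_entropy:
            flagged.append(name)
    return flagged
```

A check like this is only a heuristic. Legitimate URLs also carry long tokens such as session identifiers and signed links, so a hit is a reason to look closer rather than proof of abuse.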

Why This Matters

Security teams traditionally rely on several techniques to identify command-and-control activity:

- Monitoring connections to suspicious or uncategorized domains

- Detecting beaconing patterns in outbound traffic

- Blocking known malicious IP addresses

- Analyzing anomalous traffic volumes or timing

If malware communicates through a trusted AI platform, those indicators become far less effective. From a network monitoring perspective, the system appears to be doing exactly what it should — interacting with a legitimate AI service in routine use across the organization.
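Of the indicators listed above, timing is the one that does not depend on the destination's reputation, so it may still be worth measuring against trusted AI endpoints. The sketch below is one simple way to score how regular a client's requests are, assuming per-client request timestamps (in seconds) are available. The function names and the thresholds `max_cv` and `min_events` are assumptions for illustration.

```python
from statistics import mean, pstdev
from typing import List, Optional


def interval_regularity(timestamps: List[float]) -> Optional[float]:
    """Coefficient of variation of the gaps between consecutive requests.

    Values near 0 mean near-constant spacing. Returns None when there are
    fewer than two gaps or the mean gap is zero.
    """
    ordered = sorted(timestamps)
    gaps = [later - earlier for earlier, later in zip(ordered, ordered[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap == 0:
        return None
    return pstdev(gaps) / average_gap


def looks_like_beacon(timestamps: List[float], max_cv: float = 0.1, min_events: int = 6) -> bool:
    """Flag request series that are both long enough and very evenly spaced."""
    if len(timestamps) < min_events:
        return False
    cv = interval_regularity(timestamps)
    return cv is not None and cv <= max_cv
```

Malware that adds random jitter to its check-in interval would raise the coefficient of variation and slip under a strict threshold, so this kind of score works best as one signal among several.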

Researchers also suggest that future malware may increasingly rely on AI-driven behavioral logic rather than static programming. Instead of embedding rigid instructions, attackers could dynamically generate commands using prompts and model responses. This could enable malware to:

- Adapt its behavior based on the victim environment

- Identify high-value data before exfiltration

- Evade sandbox analysis

- Prioritize targets during ransomware operations

In effect, malware could become significantly more adaptive than traditional static payloads — with the AI platform doing much of the decision-making work.

The Broader Pattern: Abusing Trusted Infrastructure

Abusing trusted platforms for malicious communication is not a new tactic. Attackers have previously used social media platforms for command delivery, cloud storage services for malware staging, and enterprise messaging tools for data exfiltration. In each case, the core strategy is the same: blend malicious traffic into legitimate activity that defenders are unlikely to block.

AI platforms represent the newest addition to this pattern — and they introduce complications that earlier abused services did not. Because AI systems actively interpret content, generate responses, and perform web requests autonomously, they can inadvertently act as intelligent intermediaries rather than passive relays. That distinction matters when assessing how difficult these communication channels will be to detect and disrupt.

As AI capabilities expand into enterprise workflows, new attack surfaces are likely to emerge — including prompt injection, automated decision-making abuse, and integration with sensitive internal systems. Security teams should treat AI platforms as they would any other external cloud service: monitor usage patterns, audit integrations, and apply appropriate access restrictions.

Defensive Takeaways

Organizations adopting AI-enabled platforms should consider the following practices:

Monitor automation patterns

Unexpected or high-frequency automated requests to AI services may indicate abuse. Establish usage baselines and flag deviations.
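As a rough illustration of what "baseline and flag deviations" can mean in practice, the sketch below compares a host's current hourly request count to AI services against its own history, using a modified z-score built on the median absolute deviation. The function name, the minimum history length, the floor on the deviation, and the 3.5 cutoff are all assumptions chosen for the example.

```python
from statistics import median
from typing import List


def flag_deviation(history: List[int], current: int, threshold: float = 3.5) -> bool:
    """Return True when `current` sits far above the baseline in `history`.

    `history` holds past request counts per time window for one host.
    """
    if len(history) < 5:
        return False  # too little data to form a baseline (assumed minimum)
    center = median(history)
    mad = median([abs(count - center) for count in history])
    # Floor of 1 request avoids division by zero on a flat baseline (assumption).
    spread = max(mad, 1.0)
    score = 0.6745 * (current - center) / spread
    return score > threshold
```

For example, with a history of `[10, 12, 11, 9, 10, 13, 11]` requests per hour, a current count of 12 stays under the cutoff while a count of 60 is flagged. Median-based statistics are used here because a few past spikes distort them less than they would a mean and standard deviation.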

Limit unnecessary AI integrations

Restrict AI systems from accessing sensitive environments or executing automated workflows without explicit oversight.

Implement behavioral monitoring

Endpoint telemetry and anomaly detection can help identify unusual automation behavior that network monitoring alone may miss.
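One endpoint-side question that network monitoring cannot answer is which process is talking to the AI service. The sketch below assumes endpoint telemetry can be reduced to simple connection records. The `ConnectionEvent` shape, the domain `ai-assistant.example`, and the list of expected client processes are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Hypothetical values: replace with the AI services and client software
# actually approved in a given environment.
AI_SERVICE_DOMAINS = {"ai-assistant.example"}
EXPECTED_CLIENTS = {"chrome.exe", "firefox.exe", "code.exe"}


@dataclass(frozen=True)
class ConnectionEvent:
    host: str          # machine that made the connection
    process_name: str  # executable that opened the connection
    destination: str   # domain the connection went to


def unexpected_ai_clients(events: Iterable[ConnectionEvent]) -> List[ConnectionEvent]:
    """Return connections to AI services made by processes outside the allowlist."""
    return [
        event
        for event in events
        if event.destination.lower() in AI_SERVICE_DOMAINS
        and event.process_name.lower() not in EXPECTED_CLIENTS
    ]
```

Process names can be spoofed and malware can drive a legitimate browser, so an allowlist like this narrows the search rather than closing the gap.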

Apply zero-trust principles

Treat AI platforms as external services. Restrict their access to internal systems unless explicitly required and reviewed.

Educate developers and users

Awareness of prompt injection and AI misuse reduces risk both during development and in day-to-day use.

Final Thoughts

Artificial intelligence is rapidly becoming part of the modern computing ecosystem. As with any widely adopted technology, it will attract the attention of adversaries looking for new ways to bypass security controls.

The AI-as-relay model is still experimental, but the underlying pattern is not. Attackers consistently find ways to weaponize trusted infrastructure, and AI platforms now belong on that list. Defenders who understand that dynamic are better positioned to adapt their monitoring before the technique matures.

That means security monitoring must evolve beyond simple indicators of compromise and focus on behavioral patterns and system context. Understanding how emerging technologies can be misused lets defenders stay ahead rather than merely catch up.